All the articles in our “Measuring agility” series:

- #1 Measuring agility: what does it mean?

- #2 Measuring agility: a good idea?

- #3 Measuring agility: which pitfalls to avoid? (1)

- #4 Measuring agility: which pitfalls to avoid? (2)

- #5 Measuring agility: what to measure? (1)

- #6 Measuring agility: what to measure? (2)

- #7 Measuring agility: the final word

Paul now has a somewhat better grasp of what “measuring agility” means. But another thought has started to haunt him: how will people perceive the fact that he wants to collect measurements for a number of agility-related indicators?

And that scares him a little. Paul manages three teams, each made up of six to eight people. And although they are friendly people, it has to be said that they seem to live in a world of their own, talking about self-organization and flat management, running ROTIs in the middle of meetings, or gathering around a starfish twice a month.

Image by StockSnap from Pixabay

As we explained in our previous article, Paul already has a dashboard with fairly classic indicators. He can measure those on his own, since he has access to the estimates made at the start of the project, the time logged by each person, and the remaining work on each task. For these new values, however, he will have to rely on the people in his teams.

Paul fears that his teams will see new indicators of their level of agility as surveillance, and that this will trigger hostile reactions. How can he present the idea calmly, and how will it be received?

Measurement, an essential step towards improvement?

Agility implies continuous improvement: improvement of our practices, of our attitudes and, ideally, of our results. And how can we really perceive an improvement if we do not compare a “before” with an “after”?

That may well have been the view of a certain William Edwards Deming, a 20th-century American statistician, author, professor and engineer, when he presented in Japan the famous wheel that now bears his name, the Deming wheel, also known as the Shewhart cycle: PDCA, for Plan – Do – Check – Act.

Image by Karn Bulsuk, from Wikipedia, under the CC BY 4.0 license

In a cyclical, iterative, empirical model, the goal is to plan a minimum of things, put them into practice, check the result, and act accordingly to improve for the next cycle. This is radically different from a sequential approach such as the V-model, in which we plan everything up front and then execute the plan.

The “Check” step is crucial. Behind this word we could place the ability of an individual, a team or an organization to take a retrospective view, for example by comparing the current state with the target we are trying to reach. If that target is tangible and measurable, then our current position should be measurable too.

We will come back to how accurate the name “Check” really is in a future article.

Measurement in agile approaches

In the same vein, aren't the three pillars of Scrum transparency, inspection and adaptation? Inspection could very well consist of measuring a number of indicators about which we are transparent and comparing them with our target, in order to check that we are heading in the right direction and to be able to adapt.

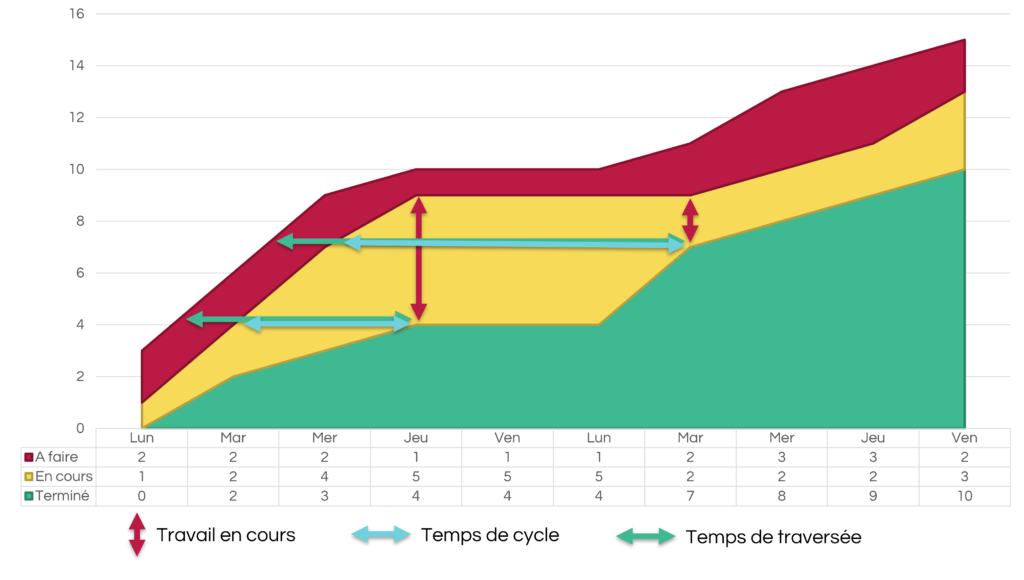

Other approaches, such as Kanban for IT, also give indicators pride of place. Lead time, cycle time, control charts… all of this information should allow us, as a team, to provide visibility, to limit the variability of our process… and to adapt if the indicators are not “good” enough.

Image taken from SmartView training materials
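To make these Kanban indicators more concrete, here is a minimal sketch in Python. It rests on several assumptions that are not part of the article: the work items and their dates are invented, lead time is taken to run from request to delivery, cycle time from start of work to delivery (definitions vary between teams), and the control limits follow the common individuals (XmR) chart convention of the mean plus or minus 2.66 times the average moving range.

```python
from datetime import date
from statistics import mean

# Hypothetical work items: (requested, started, delivered) dates.
ITEMS = [
    (date(2024, 1, 1), date(2024, 1, 3), date(2024, 1, 8)),
    (date(2024, 1, 2), date(2024, 1, 4), date(2024, 1, 6)),
    (date(2024, 1, 5), date(2024, 1, 8), date(2024, 1, 15)),
    (date(2024, 1, 9), date(2024, 1, 10), date(2024, 1, 13)),
]


def lead_time(item):
    """Days between the request and the delivery (assumed definition)."""
    requested, _started, delivered = item
    return (delivered - requested).days


def cycle_time(item):
    """Days between the start of work and the delivery (assumed definition)."""
    _requested, started, delivered = item
    return (delivered - started).days


def control_limits(values):
    """XmR chart limits: mean +/- 2.66 x average moving range.

    The lower limit is floored at zero because a duration cannot be negative.
    """
    centre = mean(values)
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    spread = 2.66 * mean(moving_ranges)
    return max(0.0, centre - spread), centre, centre + spread


lead_times = [lead_time(item) for item in ITEMS]
cycle_times = [cycle_time(item) for item in ITEMS]
lower, centre, upper = control_limits(cycle_times)

print("Lead times (days):", lead_times)
print("Cycle times (days):", cycle_times)
print(f"Control limits: {lower:.2f} / {centre:.2f} / {upper:.2f}")
```

A point falling outside the limits is only a signal worth discussing as a team, for instance in a retrospective, not a verdict on anyone's work.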

Velocity is also a measurement. Most Scrum teams use it, and Martin Fowler, co-author of the book Planning Extreme Programming with Kent Beck, the creator of XP, presents it as a practice that comes from Extreme Programming.
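As an illustration, velocity can be sketched as the sum of the estimates of the items finished in a sprint, averaged over the most recent sprints. The sprint names, the story-point values and the three-sprint window below are all hypothetical; teams compute and use velocity in different ways.

```python
from statistics import mean

# Hypothetical history: story points of the items finished in each sprint.
COMPLETED_POINTS = {
    "Sprint 1": [3, 5, 8],
    "Sprint 2": [5, 5, 2, 1],
    "Sprint 3": [8, 3, 3, 5],
}


def velocity(points):
    """Velocity of one sprint: total points of the items actually finished."""
    return sum(points)


def average_velocity(history, last_n=3):
    """Mean velocity over the last `last_n` sprints (assumed window)."""
    recent = list(history.values())[-last_n:]
    return mean(velocity(points) for points in recent)


per_sprint = {name: velocity(points) for name, points in COMPLETED_POINTS.items()}
print("Velocity per sprint:", per_sprint)
print("Average velocity:", average_velocity(COMPLETED_POINTS))
```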

In short, being agile does not mean winging it, or working without paying regular attention to the results of our work and to our performance. Inattention to results is in fact one of the major dysfunctions of a team, as described by the American author Patrick Lencioni in his book The Five Dysfunctions of a Team.

Agile teams have always measured a number of indicators, not out of vanity, not to please a manager, but with a view to improving their practices, processes and attitudes.

Measuring agility, a good idea?

In that sense, nobody should be shocked that we want to measure a number of indicators at the level of a team or an organization. To perform well, a person, a team or an organization should set itself a minimum of objectives and be able to reach them, checking regularly (for example through measurement) whether it is on the right track.

Unfortunately, this is exactly what V-model initiatives sorely lack today: the ability to look up from the work for a few minutes and ask what is going well, what is going less well, and how to improve what needs improving.

Instead, we have a plan thought out in advance, which we “roll out” for months regardless of the indicators we measure. If we started looking at them too closely, we might be tempted to put down the pen and correct our course… which would call the entire plan into question. Any resemblance to real events (happening at this very moment) is of course purely coincidental!

Image by PublicDomainPictures on Pixabay

Wanting to measure a number of indicators should therefore not bother Paul's teams… provided that Paul and his teams take these measurements for the right reasons, and that the results are analyzed through the right lens. That will be the subject of the third article in our series.

To be continued in the next episode

Which pitfalls should you avoid? Which indicators should you measure? Stay tuned to hear about the upcoming articles on the subject.